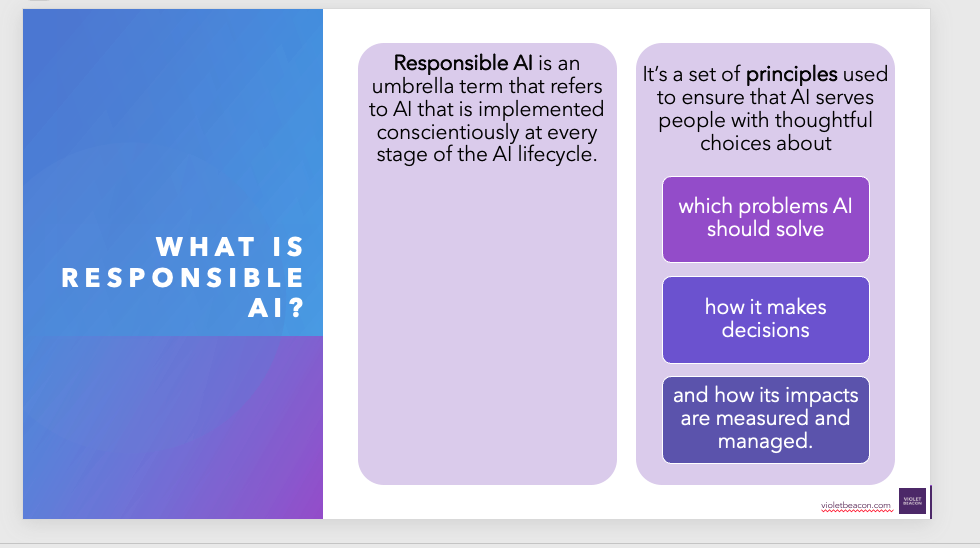

What Is Responsible AI?

Responsible AI refers to AI that is designed, built, and deployed conscientiously at every stage of the AI lifecycle. It is also a set of principles that can be used to ensure that AI serves people, through thoughtful choices about which problems AI should solve, how it makes decisions, and how its impacts are measured and managed.

Benefits of Responsible AI

Minimizing risk is one of the most practical and immediate benefits of implementing responsible AI. By adopting responsible AI practices, such as transparency, explainability, human oversight, data integrity, and ethical governance, you will actively be doing the following:

Understanding AI: Software, Hardware, and Data

To use AI responsibly, it is important to understand how it functions in practice. AI is an ecosystem made up of three essential components:

Software

Algorithms, AI models, tools, applications, and user interfaces that generate, process, and interpret outputs.

Hardware

The computing infrastructure that powers AI systems. This includes servers, GPUs, mobile devices, and edge devices.

Data

The raw material AI learns from and makes decisions with. The quality, diversity, and responsible sourcing of this data heavily influence the outcomes.

By understanding how these parts interact, we can make more informed choices about which tools to use, how to deploy them, and where to apply oversight.

Ways We Can Support Your Team

With over 20 years in software and technical consulting, we’re a trusted partner for teams looking to simplify systems, adopt AI responsibly, and save time without adding complexity. Our services are designed to meet real needs with practical, steady support.

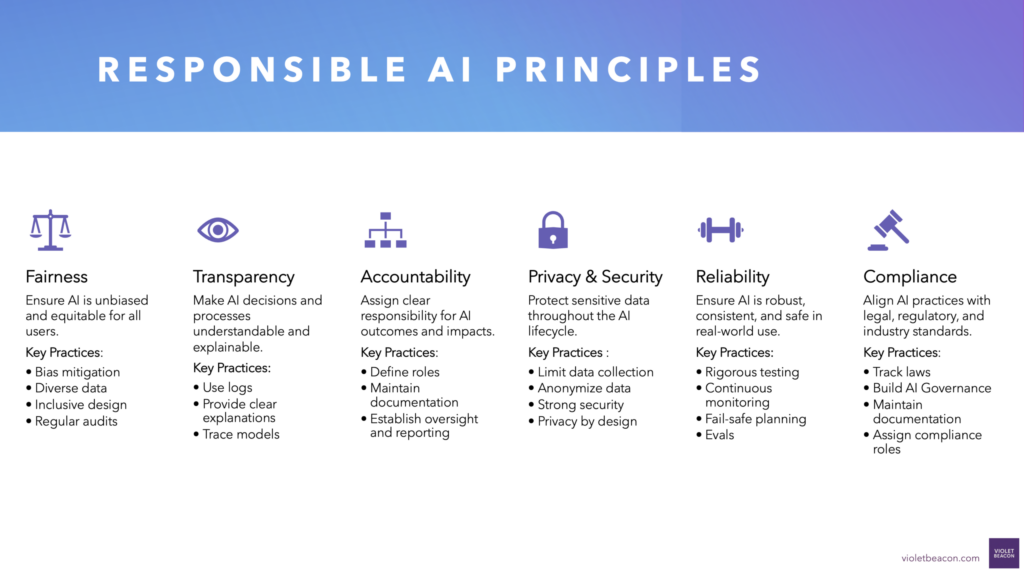

Transparency and Explainability

Disclose when and where AI is being used, particularly in client-facing contexts. Maintain meaningful human oversight and ensure that AI-generated content is subject to thoughtful review. Design user experiences that encourage users to reflect on and verify outputs rather than accept them blindly. When AI is used in decision-making, especially in high-impact or regulated areas, its outputs must be explainable. People should be able to understand how and why the AI reached its conclusions, which supports both transparency and accountability.

Model Choice and Alignment

Research and select AI models that reflect your company’s values and objectives. Consider the implications of open-source versus proprietary models, and pay close attention to security, scale, and hidden system constraints. Be cautious of models that mimic authority or consistently agree with users, as these traits can lead to misplaced trust.

Guardrails and Safety

Establish safety checks and audits for AI tools. Review outputs for factual accuracy and potential bias. Monitor for prompt injection vulnerabilities and ensure that models are regularly evaluated for behavior drift or unintended consequences.

Security and Privacy

Implement best practices to protect user data. Avoid exposing sensitive or personally identifiable information to untrusted systems. Choose secure platforms with robust memory management and access controls to prevent data leakage.

Thoughtful Deployment

Ask important questions before adopting AI. Will this tool support human growth or replace valuable human effort? What long-term risks or losses might it introduce? Are the suggestions it generates safe, responsible, and aligned with your culture?

Oversight and Governance

Establishing responsible AI practices requires collaboration and shared accountability. One effective strategy is to form dedicated groups or oversight committees tasked with both strategic and operational governance of AI.

Key responsibilities of these groups may include:

- Identifying Opportunities: Evaluate which areas of the organization are most appropriate for AI adoption and ensure alignment with business and ethical goals.

- Developing Governance Protocols: Define guidelines for safe, fair, and transparent AI use, including data policies, approval workflows, and human review standards.

- Monitoring Implementation: Oversee the actual deployment of AI tools to ensure they are used appropriately, securely, and in line with agreed-upon standards.

- Evaluating Impact: Regularly assess the outcomes of AI adoption, measuring performance, identifying unintended consequences, and addressing them proactively.

- Fostering Education and Buy-In: Build a culture of responsible use by offering ongoing training and inviting diverse voices into the conversation across teams, roles, and perspectives.

These oversight bodies should reflect a mix of technical, operational, ethical, and user-centered expertise. Their purpose is to ensure that AI not only works but works in a way that builds trust, minimizes harm, and supports long-term organizational resilience.

Risks to Monitor

Responsible AI adoption requires proactive attention to the unintended consequences that may arise. Common risks include:

- Overreliance on Automation: When AI becomes a crutch, critical thinking and skills may atrophy.

- Hallucinations and Misinformation: AI may deliver plausible but incorrect outputs with confidence.

- Bias Amplification: AI trained on flawed data can reinforce systemic inequities.

- Privacy Breaches: Improper data handling can expose sensitive or regulated information.

- Reduced Accountability: Without transparency and audit trails, it becomes difficult to trace harmful outcomes.

- Misleading User Experience: When AI appears too humanlike or authoritative, users may defer to its judgment without scrutiny.

- Tool Drift and Unintended Use: AI may evolve in unexpected ways or be used outside its intended context, creating risk.

Mitigating these risks involves careful planning, continuous testing, clear governance policies, and ongoing human involvement in oversight and review.